Quick question:

What’s the difference between a model and a theory?

Quick answer:

While a theory explains a phenomenon, a model simplifies it to make it easier to understand. The two are connected: models can lay the foundation for a theory, while a theory can in turn be used to create a model.

I’m not yet at the stage to discuss theories. Right now, I’m trying to create, use and test models to understand my topic, so this article will mainly be about that. French education obliges: there’s a theoretical part first, and then I present my current issue. No worries, I’ll keep it short.

About models

Let’s sum up: you can model a house with Lego blocks or you can do it with a blueprint. Which is the best model? Well… It depends on your purpose. Many models can exist for different purposes. A model is both enabling and limiting. In other words, models can be useful, but they will never be perfect. The better you know their limits, the more wisely you can use them.

One of the general limits of a model is that it shapes the way you think about a problem. It gives a biased view of the world.

For instance, in 1979 Michael E. Porter developed a method, “Porter's Five Forces Framework”, to determine the profitability of an industry. I won’t dwell on the details; the Wikipedia page is well made. Case in point: OK, it helps you think about how to evaluate the competitive intensity of a given industry, but what about changing your product so that you address another market? Instead of adapting to a competitive context, you can move to a better environment.

Another example: the movie Arrival¹ (2016), directed by Villeneuve, illustrates this very well in a sci-fi setting. I’ll stop here to avoid spoilers. Those who know the movie will probably see my point.

But how to make one?

Criticising a model is quite easy. Making one is much harder.

As I’m looking at how people make AI tools for customised healthcare, I try to categorise the different key points they report: the data they used, the problems and limits they ran into, the solutions they found to circumvent some of them… But how do I make sense of it all?

One of my first frameworks categorised the elements (issues or solutions found) with three labels: data collection, data exploitation and use. Actually, my previous article “The fog in a PhD” was partially built on this segmentation of the problem. In short, I used the formula f(data) = knowledge to model it and derive the three categories mentioned.

The limits quickly became evident.

AI solutions are problem-based. You don’t collect data or build an algorithm out of the blue. You set out to answer a problem, or at least you have a general idea behind it. Because the work is problem-specific, many of the issues or solutions result from a combination of factors. In other words, the project has a history, or a sort of technical debt² that you accumulate and carry.

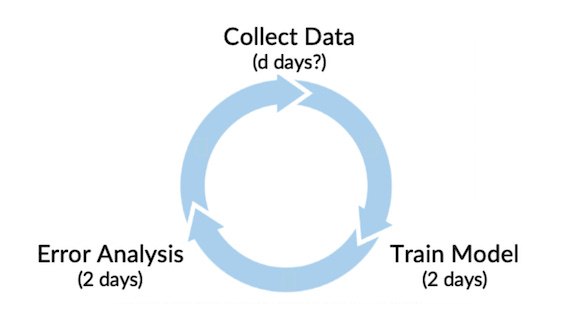

There are many more steps than just “gathering data”, “making an AI” and “using it”. Before all that, you bring to light the problem you want to answer; data collection can happen several times along the project and in different ways (or by different people); in most cases you pre-process this data to “clean” it (meaning you transform some of the values, manage the missing ones, etc.), but those operations are deeply linked to the algorithm you will use just after, and so on. All in all, many different steps, or sub-steps, appear in the process, and it is not always linear. Sometimes you go backwards and iterate. On this matter, Andrew Ng (one of the leading figures in AI) recently started to promote a data-centric way of thinking instead of an algorithm-oriented one, with many loops to collect better data based on the results of the AI tool.

Instead of a step-centric approach (e.g. problem definition - data collection - data processing - evaluation - use), could an issue-centred model (managing small data, ethical issues…) work better? The main difficulty with the “process view” is that some key points arise at one step but are answered, or come back, at another: small data issues can eventually be corrected later on at the processing phase, or they can become a burden when generalising the tool. So where should I classify those issues?
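A minimal sketch of one way to sidestep the either/or, assuming each finding can carry both an issue theme and the set of steps it touches. The names (`Finding`, `by_theme`, `by_step`) and the sample entries are hypothetical and only there for illustration; the step labels come from the example list above.

```python
from collections import defaultdict
from dataclasses import dataclass

# Step labels taken from the example list in the text (not a fixed standard).
STEPS = ("problem definition", "data collection", "data processing",
         "evaluation", "use")


@dataclass(frozen=True)
class Finding:
    description: str
    theme: str         # issue-centred label, e.g. "small data"
    steps: frozenset   # every step where the issue arises or is handled


def by_theme(findings):
    """Issue-centred view: one bucket per theme."""
    grouped = defaultdict(list)
    for finding in findings:
        grouped[finding.theme].append(finding)
    return dict(grouped)


def by_step(findings):
    """Step-centred view: a finding appears under every step it touches."""
    grouped = {step: [] for step in STEPS}
    for finding in findings:
        for step in finding.steps:
            grouped.setdefault(step, []).append(finding)
    return grouped


# Made-up sample entries, for illustration only.
findings = [
    Finding("few patients in the cohort", "small data",
            frozenset({"data collection", "data processing", "use"})),
    Finding("consent for data reuse", "ethical issues",
            frozenset({"data collection"})),
]

for step, items in by_step(findings).items():
    print(step, "->", [item.theme for item in items])
```

With this kind of tagging, the small data issue is stored once but shows up under collection, processing and use, so the two views become two ways of reading the same notes rather than two competing classifications.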

The new model I should create needs to match my intended use. My focus right now is to understand AI tools in customised healthcare. Yet that doesn’t seem precise enough to foresee the form the model should take. I’m still working on it as I keep piling up new information. I have a hunch this could become one of the cornerstones of my PhD. Wait and see!

Also, I’m open to suggestions! Depending on how it goes, I might open a “thread” on the building of this model (an alternative that seems more interactive than the classic “article + comments”).

That’s all for now. I’m busy racking my brains at the moment, but it’s fun. See you soon!

¹ Based on Ted Chiang’s novella “Story of Your Life”, for the book lovers.

² “Sort of” because it is not only technical: sometimes the way you look at the problem at the beginning heavily influences the rest of the project.

³ I am not sure I understand where the "two days" in Andrew Ng's diagram comes from. Training can take only a few seconds or several weeks depending on what you want to analyse (the frequency of words in a book vs the whole universe).