Making predictions can be fun since they usually become irrelevant. So, here and now, for the beauty of your eyes, I will show you my divination skills ✨ (I'm putting a reminder to read this post in a couple of years to see how wrong I was).

But beyond the predictions, what’s interesting is to make explicit the different trends observed. So please feel free to comment and add your input to enrich the conversation.

The Indian summer is “any spell of warm, quiet, hazy weather that may occur in October or November” according to weather historian William R. Deedler (according to Wikipedia, I was too lazy to double-check[1]). From my current readings, I wonder whether AI and Data Science are going through this kind of spell before a new winter.

As is well documented by Russell and Norvig[2], AI history is full of cycles, with “AI winters” and sudden breakthroughs leading to new developments, until the next winter. It would be ill-advised to predict future trends based on past ones, as there is always an element of the unknown and of novelty that reshuffles the cards. Yet here I am.

Everything looks good now.

In 2011, a “Deep Learning AI” wave started with a breakthrough that beat the previous records in image recognition. Deep Learning is a subfield of Machine Learning, which is one approach to AI but not the only one. It relies on Big Data: the more data you have, the better your algorithms should be. The Deep Learning wave is therefore closely tied to data (Big Data, very Big Data!) and thus to the data engineers, analysts and scientists who build the infrastructure and use the AI tools.

In the academic field, AI has gained in appeal, as seen in the increase in scientists, students, publications and money invested in it, as the AI100 reports.

In business, AI has gotten out of the lab, and many startups and business applications have appeared. Many indicators point to growth in the related markets. Firms are looking for data scientists, and so on.

Countries like China, the US and France are trying to become leaders in the AI field and are investing massively in it by different means: giving more money to academics, reducing taxes to promote AI research in firms, creating infrastructure to collect data, etc.

Hence, it would appear that AI is here to stay for many years to come. At least, it’s certain that the investments already made will keep running for some years.

Yet, some small signals tell another story…

In the academic field, Daniel Lemire summed up, in an article titled “Peer-reviewed papers are getting increasingly boring”, the misconception that more investment implies more output. On the contrary, higher investment could simply reflect a higher cost of production (of knowledge, in this case). The increase in scientific publications is undermined by how marginal the contributions are. For instance, many articles propose a twist on a previous algorithm to gain a marginal increase in performance. This problem is not limited to AI; it affects many fields, as there is an underlying structural issue at stake. However, the high investments in AI tend to increase the pressure without removing the bottleneck. An “intellectual” speculative bubble of sorts.

In society, it’s still not certain to what extent AI could bring benefits. And yet:

More and more of AI’s limits are being highlighted, in terms of ethics, actual precision, etc., coupled with an increase in regulation.

There may well be many “overpromise-underachieve” applications promoted by startups or pursued by big companies. Some of them clearly lie in order to attract investors or sell their products. But it’s only a matter of time before those illusions stop working.

Some politicians hijack this trend to push their agenda for very short-term gains, as Klaus Hoeyer shows in “Data as promise: Reconfiguring Danish public health through personalized medicine”.

That’s why there might also be an actual economic bubble ready to burst.

Just as happened during the AI winter of the mid-80s with “expert systems”, the bursting of the academic and business bubbles could lead to a setback in the AI field.

So, is winter coming? Or will global warming save us all?

However, this AI wave is creating many byproducts, like the data-driven decision paradigm, Big Data infrastructures, new jobs and skills around data, and so on. Even if they came from a bubble, those byproducts will remain and create new possibilities.

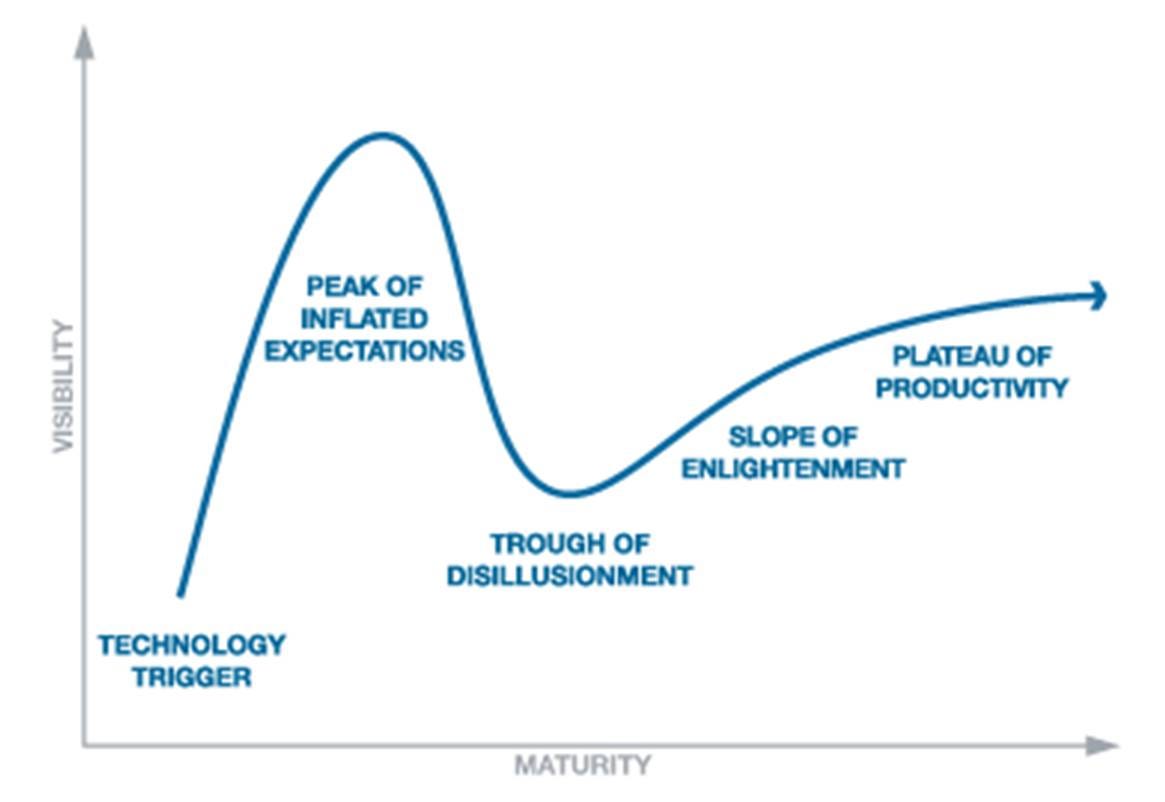

Gartner’s curve models an interesting phenomenon: new technology, hype, disillusionment and then new usages[3]. The main point is that a new technology is usually used at first to reproduce the existing ways of doing things, in the hope that it will improve them. However, in many cases the technology fails to displace the old ways. Then, later, people with a bit of creativity use this technology to do new things, unbound by the old ways of thinking. For AI, maybe after the hype passes, people with different approaches will find interesting ways to exploit this technology.

Maybe there is no bubble and current expectations of AI will be realised in due time… Also, some new ways to apply these technologies are already emerging in AI, such as ways to better understand what patients say. With the increasing pace of innovation, they could appear before the bubble bursts or disillusionment sets in, allowing a smoother transition between current hopes and the actual potential of the technology.

The topic is much larger than the few points I made; discussions could go on for hours, as there are many examples, counter-examples and divergent opinions. I just tried to summarise some key points, but feel free to add yours!

[1] Science 0 - 1 CopyPasta

[2] Artificial Intelligence: A Modern Approach, 4th edition, 2020, by Stuart Russell and Peter Norvig

[3] Warning: I’m only interested in the main mechanism. I don’t brainlessly generalise it.

Hi,

Nice post. I would point out that "The Deep Learning wave is closely related to data" (how do we quote here?) is not always true.

The AI that Google used to beat the Go grandmaster a few years ago was trained on a lot of data (a dataset of many Go games). But its little brother (which beat it) trained itself by playing alone against itself, and so needed zero data.

Perhaps one day we’ll be living in a world of cyborgs and witness how humanity is defeated by zealous scrutiny & control through the misuse of AI & big data.

https://www.google.com/amp/s/amp.ft.com/content/50bb4830-6a4c-11e6-ae5b-a7cc5dd5a28c